A load balancer is a critical component of any cloud-based infrastructure, as it ensures that incoming requests are distributed evenly across a group of servers or other resources. This helps to improve the performance, scalability, and reliability of the system, as it reduces the risk of any single server becoming overwhelmed and allows the workload to be distributed more efficiently.

Load balancers first appeared in the 1990s as hardware appliances that distributed traffic across a network. Today, they are a crucial piece of cloud infrastructure, and users have a variety of load balancers to choose from. These can be categorized as physical (where the load balancer is built on top of physical machines) and virtual (where it is built on top of network virtualization).

Before we look into the details of each type of load balancer, let's discuss the functions of a load balancer in an architecture:

Health Check

Health checks are the cornerstone of the entire load balancing process. The purpose of a load balancer is to distribute traffic to a target group of servers, but that only works if the underlying servers are functioning well and are able to receive and respond to requests. Health checks ensure that the underlying servers are up and running and can accept incoming traffic in a 'healthy' manner.

Health checks begin as soon as a server is added to the target group and usually take about a minute to complete. The checks are then repeated at a configurable interval and can be done in two ways:

Active Health Checks: The load balancer periodically sends requests to the servers in the target group to check the health of each server, according to the health check settings of the target group. After each check, the load balancer closes the connection that was established for it.

Passive Health Checks: The load balancer does not send any health check requests. Instead, it observes how target servers respond to the connections they receive. This enables the load balancer to detect a server whose health is deteriorating and take action before the server is reported as unhealthy.
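The two styles of health check can be sketched roughly as follows. This is a minimal illustration, not how any particular load balancer implements it: the `Target` and `HealthChecker` names, the consecutive-success and consecutive-failure thresholds, and the rule that a 5xx response counts as a failure are all assumptions made for the example.

```python
import socket
from dataclasses import dataclass
from typing import Callable


def tcp_probe(host: str, port: int, timeout: float = 2.0) -> bool:
    """Active probe: open a TCP connection, then close it again."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


@dataclass
class Target:
    host: str
    port: int
    healthy: bool = True
    successes: int = 0  # consecutive successful checks
    failures: int = 0   # consecutive failed checks


class HealthChecker:
    def __init__(self, probe: Callable[[str, int], bool] = tcp_probe,
                 healthy_threshold: int = 3, unhealthy_threshold: int = 2):
        self.probe = probe
        self.healthy_threshold = healthy_threshold      # assumed value
        self.unhealthy_threshold = unhealthy_threshold  # assumed value

    def record(self, target: Target, ok: bool) -> None:
        """Update the counters and flip the state once a threshold is reached."""
        if ok:
            target.successes += 1
            target.failures = 0
            if target.successes >= self.healthy_threshold:
                target.healthy = True
        else:
            target.failures += 1
            target.successes = 0
            if target.failures >= self.unhealthy_threshold:
                target.healthy = False

    def active_check(self, target: Target) -> None:
        # Active: the load balancer itself sends a probe to the server.
        self.record(target, self.probe(target.host, target.port))

    def passive_check(self, target: Target, status_code: int) -> None:
        # Passive: judge the server by its response to real client traffic.
        self.record(target, status_code < 500)
```

In practice `active_check` would run on a timer for every server in the target group, while `passive_check` would be called for each response that passes through the load balancer.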

SSL Decryption

Another important function of a load balancer is to decrypt the SSL-encrypted traffic it receives and forward the decrypted data to the target servers. Secure Sockets Layer (SSL), since succeeded by Transport Layer Security (TLS), is the standard technology for establishing an encrypted link between a web browser and a web server. This encryption is the key difference between HTTP and HTTPS traffic.

When a load balancer receives SSL traffic via HTTPS, it has two options:

Option 1: Decrypt the traffic and forward it to the servers in the target group. This is called SSL termination, since the encrypted connection ends at the load balancer. All the servers receive decrypted traffic and do not have to spend extra CPU cycles on decryption, which lowers the latency of generating a response. However, since decryption happens at the load balancer, the traffic between the load balancer and the target servers is unencrypted and hence vulnerable. This risk is greatly reduced when the load balancer and the target servers are hosted in the same data center.

Option 2: Do not decrypt the data; forward it as-is to the target servers, which decrypt it themselves. This is called SSL passthrough. It uses more CPU power on the target web servers but provides extra security. This method is useful when the load balancer and the target group are not located in the same data center.

Session Persistence

An end user's session information is often stored locally in the web browser. This helps users receive a consistent experience in the application. For example, in a shopping app where the cart is held on the server that handled my earlier requests, the load balancer must keep sending my requests to that same server once I add items to the cart, or the cart information will be lost. Load balancers identify such traffic and keep the session pinned to the same server until the transaction is completed.
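One common way to get this behaviour is to tag each session with an identifier (for example, in a cookie) and remember which server it was first sent to. The sketch below assumes exactly that; `StickyBalancer`, the in-memory session table, and the server names are hypothetical and only illustrate the idea.

```python
import itertools
import uuid
from typing import Dict, List, Optional, Tuple


class StickyBalancer:
    def __init__(self, servers: List[str]):
        self._round_robin = itertools.cycle(servers)
        self._sessions: Dict[str, str] = {}  # session id -> pinned server

    def route(self, session_id: Optional[str] = None) -> Tuple[str, str]:
        """Return (session_id, server) for one incoming request."""
        if session_id in self._sessions:
            # Known session: keep sending it to the same server.
            return session_id, self._sessions[session_id]
        # New or unknown session: pick the next server and remember the choice.
        session_id = session_id or uuid.uuid4().hex
        server = next(self._round_robin)
        self._sessions[session_id] = server
        return session_id, server

    def end_session(self, session_id: str) -> None:
        """Forget the pinning once the transaction is complete."""
        self._sessions.pop(session_id, None)
```

The first call to `route()` returns a fresh session id; the client sends that id back on later requests, and `route(session_id)` keeps returning the same server until `end_session` is called.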

Routing Algorithms

A load balancer routes incoming traffic to the target servers according to a routing algorithm, which can be configured on the load balancer console:

Round Robin: Traffic is directed to each server sequentially. Useful when the target servers have equal specifications and there are few persistent connections.

Least Connection Method: Directs traffic to the server with the fewest active connections. Useful when there is a high number of persistent connections.

Least Response Time Method: Directs traffic to the server with the fewest active connections and the lowest average response time.

IP Hash: The client IP address determines which server receives the request.

Hash: Distributes traffic based on a key that the user defines, such as the client IP or the request URL.
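The algorithms above can be sketched in a few lines each. This is an illustrative sketch only: the class and function names are made up for the example, and the least-response-time rule shown here (fewest connections first, average response time as the tie-breaker) is one possible reading of the description, not a vendor's definition.

```python
import hashlib
import itertools
from typing import Dict, List, Tuple


class RoundRobin:
    """Hand out servers one after another, wrapping around at the end."""

    def __init__(self, servers: List[str]):
        self._cycle = itertools.cycle(servers)

    def pick(self) -> str:
        return next(self._cycle)


class LeastConnections:
    """Send each new connection to the server with the fewest active ones."""

    def __init__(self, servers: List[str]):
        self.active: Dict[str, int] = {s: 0 for s in servers}

    def pick(self) -> str:
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server: str) -> None:
        # Call this when a connection to the server closes.
        self.active[server] -= 1


def least_response_time(stats: Dict[str, Tuple[int, float]]) -> str:
    """stats maps server -> (active_connections, avg_response_ms).

    Tuples compare element by element, so connections are compared first
    and the average response time breaks ties.
    """
    return min(stats, key=lambda s: stats[s])


def hash_pick(key: str, servers: List[str]) -> str:
    """Map a key (client IP, URL, ...) to a server in a repeatable way."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]
```

IP Hash is simply `hash_pick` with the client IP address as the key. Note that this simple modulo scheme remaps most keys when the number of servers changes; consistent hashing is the usual way to soften that, but it is outside the scope of this sketch.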

Scaling

Finally, a load balancer must be able to scale up and down as required. This applies both to the load balancer's own capacity and to handling real-time increases and decreases in the number of target servers. Virtual load balancers can easily scale up and down to match incoming traffic.

To manage the scaling of target servers, load balancers are tightly integrated with the auto scaling component of the platform, which adds servers to and removes servers from the target group as traffic increases or decreases.
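As a rough illustration of that interaction, the sketch below computes how many servers a target group needs for a given request rate and then grows or shrinks the group to match. The per-server capacity, the minimum and maximum sizes, and the `app-N` naming are assumptions made for the example; a real auto scaling service uses its own metrics and policies.

```python
import math
from typing import List


def desired_servers(requests_per_second: float, capacity_per_server: float,
                    min_servers: int = 1, max_servers: int = 10) -> int:
    """Servers needed for the current load, clamped to the allowed range."""
    needed = math.ceil(requests_per_second / capacity_per_server)
    return max(min_servers, min(max_servers, needed))


class TargetGroup:
    def __init__(self) -> None:
        self.servers: List[str] = []

    def scale_to(self, desired: int) -> None:
        # Scale out: register new servers until the group is large enough.
        while len(self.servers) < desired:
            self.servers.append(f"app-{len(self.servers) + 1}")
        # Scale in: deregister the most recently added servers.
        while len(self.servers) > desired:
            self.servers.pop()
```

For example, `desired_servers(950, 200)` asks for five servers, and `TargetGroup.scale_to(5)` registers them with the group so the load balancer can start routing to them.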

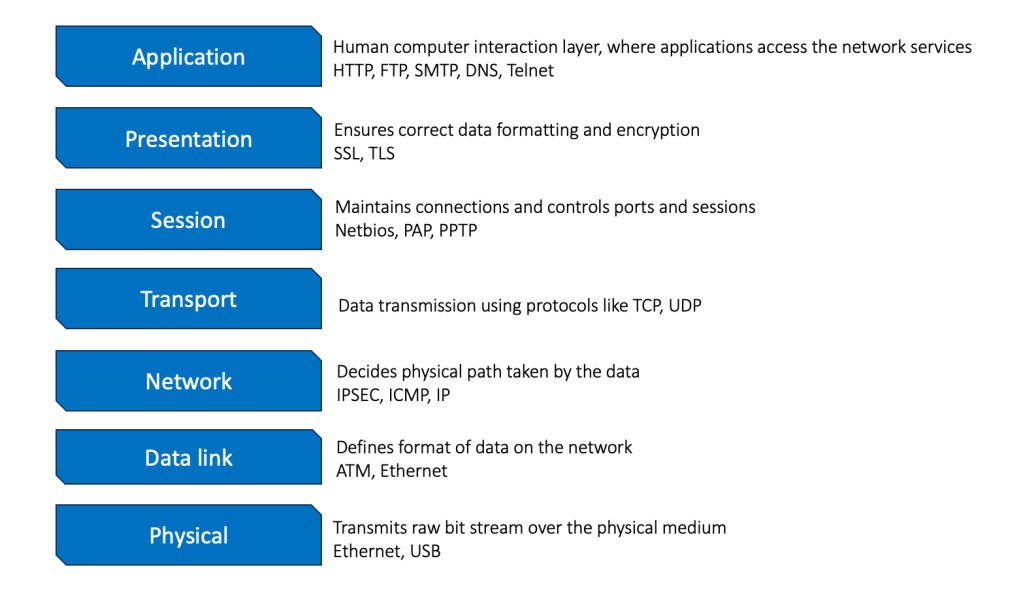

A quick word on the OSI model

The OSI model is the universal language for computer networking. It is based on splitting the communication system into seven abstract layers, each one stacked upon the last. Each layer has a specific function to perform in networking. It is useful to review what we know about the seven layers before we discuss the different types of load balancers.

Different types of load balancers

There are different types of load balancers available, each of which has its own specific features and capabilities. The main types of load balancers are the classic load balancer, application load balancer, and network load balancer. We will refer to Alibaba Cloud server load balancers for features and limitations.

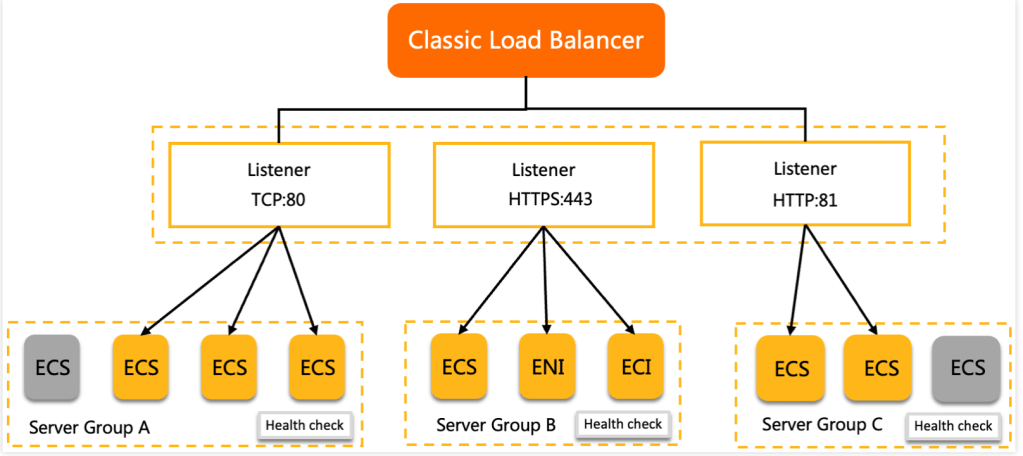

Classic load balancers

CLB supports TCP, UDP, HTTP, and HTTPS. CLB provides advanced Layer 4 processing capabilities and basic Layer 7 processing capabilities. CLB uses virtual IP addresses to provide load balancing services for the backend pool, which consists of servers deployed in the same region. Network traffic is distributed across multiple backend servers based on forwarding rules. This ensures the performance and availability of applications.

- Alibaba Cloud CLB can handle up to one million concurrent connections and 50,000 QPS per instance.

- CLB supports only domain name and URL based forwarding.

- CLB can use ECS instances, elastic network interfaces (ENIs), and elastic container instances as backend servers.

Classic load balancers are deployed on top of physical machines, which has some advantages and disadvantages:

Pros:

The underlying instances used for CLB have processors that are specialized for receiving and managing high traffic. This results in higher system throughput.

A single type of load balancer handles both Layer 4 and, to an extent, Layer 7 load balancing.

Cons:

Inability to Scale: Auto scaling the load balancer itself can be difficult, as it is tied to a specific instance.

Fixed cost: This can be a drawback when traffic is unpredictable, because the fixed-price instance may sit underutilized.

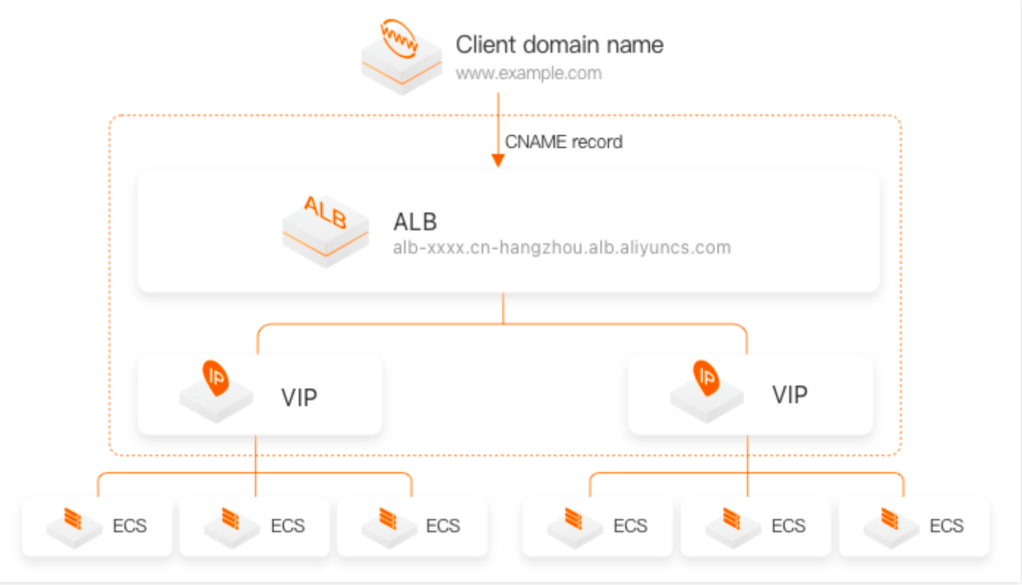

Application Load Balancer

Application load balancers, also known as Layer 7 load balancers, are a more recent development in the world of load balancing. Like classic load balancers, they can operate at the application layer of the OSI model, but they offer a number of additional features and capabilities. An ALB can examine the contents of an incoming request in order to route it to the appropriate server. This makes it ideal for environments where the workload is heavily focused on application-level processing, such as web applications or microservices. Application load balancers can also scale automatically to meet the demands of the application, and they provide advanced routing capabilities based on the content of the request. For example, an application might have different microservices for iOS and Android users, or different microservices for users coming in from different regions. Based on this content, the ALB can direct each user to the appropriate destination.
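Content-based routing of this kind boils down to an ordered list of rules that are matched against each request. The following is a minimal sketch under that assumption; the `Request` and `Rule` types, the `User-Agent` substrings, and the target group names are hypothetical and are not ALB configuration syntax.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Request:
    path: str
    headers: Dict[str, str]


@dataclass
class Rule:
    matches: Callable[[Request], bool]  # condition evaluated on the request
    target_group: str                   # where matching requests are sent


# Rules are evaluated in order; the first match wins.
RULES: List[Rule] = [
    Rule(lambda r: "iPhone" in r.headers.get("User-Agent", ""), "ios-service"),
    Rule(lambda r: "Android" in r.headers.get("User-Agent", ""), "android-service"),
    Rule(lambda r: r.path.startswith("/api/"), "api-service"),
]


def route(request: Request, rules: List[Rule] = RULES,
          default: str = "web-service") -> str:
    """Return the target group for a request, or the default if no rule matches."""
    for rule in rules:
        if rule.matches(request):
            return rule.target_group
    return default
```

A Layer 4 load balancer cannot make this decision, because the path and headers are only visible once the HTTP request itself has been parsed.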

Alibaba Cloud ALB is optimized to balance traffic over HTTP, HTTPS, and Quick UDP Internet Connections (QUIC). ALB is integrated with other cloud native services and can serve as an ingress gateway to manage traffic.

ALB supports both static and dynamic IP modes, and performance varies with the IP mode:

| IP mode | Maximum queries per second (QPS) | Maximum connections per second (CPS) |
| --- | --- | --- |
| Static | 100,000 | 100,000 |
| Dynamic | 1,000,000 | 1,000,000 |

- ALB is developed on top of the network function virtualization (NFV) platform and supports auto scaling.

- It supports HTTP rewrites, redirects, overwrites, and throttling

- It can support ECS instances, ENI, Elastic container instances, IP addresses and serverless functions as backend server type.

- It supports traffic splitting, mirroring, canary releases, and blue-green deployments
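Traffic splitting, canary releases, and blue-green deployments all rest on the same idea: each version of the service gets a weight, and requests are distributed in proportion to those weights. The sketch below assumes that model; `pick_version` and the version names are hypothetical.

```python
import random
from typing import Dict, Optional


def pick_version(weights: Dict[str, int],
                 rng: Optional[random.Random] = None) -> str:
    """Choose a backend version at random, in proportion to its weight."""
    rng = rng or random.Random()
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


# Canary release: send roughly 10% of requests to the new version.
canary_weights = {"stable": 90, "canary": 10}

# Blue-green deployment: switch all traffic from one environment to the other.
blue_green_weights = {"blue": 0, "green": 100}
```

Raising the canary weight step by step shifts more traffic to the new version, and setting it back to zero rolls the release back without touching the servers themselves.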

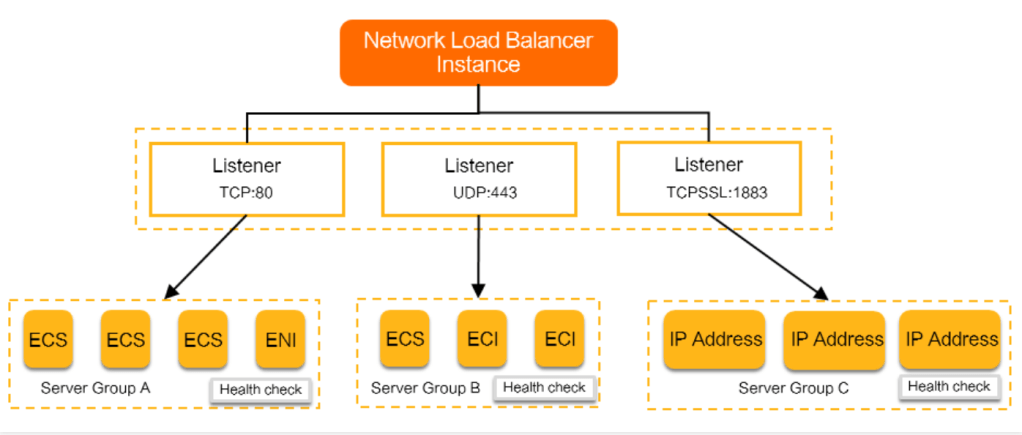

Network Load Balancers

Network load balancers, also known as Layer 4 load balancers, operate at the transport layer of the OSI model. This means that they route traffic based on the IP addresses and ports of incoming packets, but they do not inspect the contents of the packets themselves. Network load balancers are often used to distribute traffic to applications that use the TCP or UDP protocols, but they do not provide the advanced routing capabilities of classic or application load balancers.

NLB is often used in environments where the workload is heavily focused on network-level processing, such as load balancing for a network of web servers or gaming scenarios with very high traffic. An NLB instance supports up to 100 million concurrent connections and 100 Gbit/s throughput. You can use NLB to handle massive numbers of requests from IoT devices.

You do not need to select a specification for an NLB instance or manually upgrade or downgrade an NLB instance when workloads change. An NLB instance can automatically scale on demand. NLB also supports dual stack networking (IPv4 and IPv6), listening by port range, limiting the number of new connections per second, and connection draining.
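To make "limiting the number of new connections per second" concrete, here is a minimal fixed-window limiter. It is only a sketch of the general idea, not a description of how NLB enforces its limit; the `NewConnectionLimiter` name and the one-second window are assumptions, and the clock is passed in explicitly so the behaviour is easy to follow.

```python
from typing import Optional


class NewConnectionLimiter:
    def __init__(self, max_per_second: int):
        self.max_per_second = max_per_second
        self._window: Optional[int] = None  # the second currently being counted
        self._count = 0                     # connections accepted in that second

    def allow(self, now: float) -> bool:
        """Return True if a new connection arriving at time `now` is accepted."""
        window = int(now)
        if window != self._window:
            # A new one-second window has started: reset the counter.
            self._window, self._count = window, 0
        if self._count < self.max_per_second:
            self._count += 1
            return True
        return False
```

With a limit of two, the first two connections inside a given second are accepted and the third is rejected; once the next second begins, connections are accepted again.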

- Alibaba Cloud NLB can also be used to balance traffic across on-premises servers in a different region from the NLB.

- NLB is developed on top of the NFV platform instead of physical machines and supports fast and automatic scaling

- It can support up to 100 million concurrent connections per instance

- It can support ECS instances, ENI, Elastic container instances and IP addresses as backend server type.

- NLB supports cross-zone disaster recovery and serves as an ingress and egress for both on-premises and cloud services.

Pricing comparison

Pricing for server load balancers on Alibaba Cloud is calculated on the basis of a unit of consumption called the Load Balancer Capacity Unit (LCU). The definition of an LCU for ALB, NLB, and CLB, along with the price, is as follows:

| Instance type | Price per LCU (Unit: USD/hour/transit router) | LCU definition |
| --- | --- | --- |
| Application Load Balancer | 0.007 | 1 ALB LCU = 25 new connections per second; 3,000 concurrent connections (sampled every minute); 1 GB of data transfer processed per hour; 1,000 rules processed per hour |
| Network Load Balancer | 0.005 | 1 LCU = For TCP: 800 new connections per second; 100,000 concurrent connections (sampled every minute); 1 GB of data transfer processed per hour. For UDP: 400 new connections per second; 50,000 concurrent connections (sampled every minute); 1 GB of data transfer processed per hour. For SSL over TCP: 50 new connections per second; 3,000 concurrent connections (sampled every minute); 1 GB of data transfer processed per hour |
| Classic Load Balancer | 0.007 | 1 LCU = For TCP: 800 new connections per second; 100,000 concurrent connections (sampled every minute); 1 GB of data transfer processed per hour. For UDP: 400 new connections per second; 50,000 concurrent connections (sampled every minute); 1 GB of data transfer processed per hour. For HTTP/HTTPS: 25 new connections per second; 3,000 concurrent connections (sampled every minute); 1 GB of data transfer processed per hour; 1,000 rules processed per hour |
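As a worked example of how the LCU figures translate into cost, the sketch below uses the ALB row from the table. It assumes that the LCUs consumed in an hour are determined by the dimension with the highest usage, which is a common way for capacity-unit pricing to work; check the current Alibaba Cloud billing documentation before relying on it. The usage numbers are invented for the example.

```python
from typing import Dict

# One ALB LCU, per dimension (values from the pricing table).
ALB_LCU: Dict[str, float] = {
    "new_connections_per_second": 25,
    "concurrent_connections": 3_000,
    "gb_processed_per_hour": 1,
    "rules_processed_per_hour": 1_000,
}
ALB_PRICE_PER_LCU_HOUR = 0.007  # USD, from the pricing table


def lcus_consumed(usage: Dict[str, float],
                  unit: Dict[str, float] = ALB_LCU) -> float:
    """LCUs for one hour, assuming the highest-usage dimension is billed."""
    return max(usage.get(dimension, 0) / size for dimension, size in unit.items())


def hourly_cost(usage: Dict[str, float]) -> float:
    return lcus_consumed(usage) * ALB_PRICE_PER_LCU_HOUR


# Hypothetical hour of traffic.
usage = {
    "new_connections_per_second": 100,  # 100 / 25    = 4 LCUs
    "concurrent_connections": 9_000,    # 9,000 / 3,000 = 3 LCUs
    "gb_processed_per_hour": 2,         # 2 / 1       = 2 LCUs
    "rules_processed_per_hour": 500,    # 500 / 1,000 = 0.5 LCUs
}
```

Under that assumption, new connections are the dominant dimension here, so the hour is billed as 4 LCUs, or about 0.028 USD.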

With these different types of load balancers, users can choose the right type of instance and maintain high performance and high availability while benefiting from usage-based pricing.

This blog has also been published on Alibaba Cloud Blog site here.

Cover photo image: https://unsplash.com/