As you dive deeper into the world of containers, microservices and Kubernetes, you keep running into technologies, tools and concepts that did not exist a few years ago. A single conversation about cloud native solutions can bring up Knative, Fluentd, Fargate, Helm and more, which shows how quickly the ecosystem is evolving. One such concept is the service mesh, which has become a common component of microservices and container based architectures. Istio is pretty much the industry standard when it comes to service mesh, and it is an important tool to understand for anyone interested in cloud native solutions. However, before we look at what Istio is, it is important to understand why a service mesh is needed.

A service mesh is a solution for managing communication between the services in a microservices architecture. Microservices communicated with each other before service meshes existed, but as the number of services grows, that communication runs into a few challenges.

Inter-communication

Imagine an e-commerce organization that has migrated its system from a monolithic setup to a microservices architecture deployed in a Kubernetes cluster. There are now several microservices: the front end web server, payment service, shopping cart service, inventory service, backend database and so on. When a user logs into the website, selects a product and purchases it, all of these services end up communicating with each other. Control passes from the web server to inventory, to shopping cart, to payment, and further back and forth as the user explores the website. This means every service needs the endpoints of the services it talks to. That configuration has to be maintained in each microservice, and every time a new service is added, its endpoints have to be configured in the services that call it.

Security Logic

Typically, in a Kubernetes cluster deployment, a firewall sits in front of the cluster and restricts unauthorized access to the services inside. But once a request is inside the cluster, there is no second level of security between services. Communication between microservices happens freely over an unsecured protocol such as HTTP, so if one service inside the cluster is compromised, the attacker can reach the other services as well, because individual services have no security of their own. If the team wants to secure individual services, security logic has to be written for every microservice.

Retry logic

There will be times when a service fails or takes an exceptionally long time to respond to a request. In these scenarios, the caller should know when to abort the connection and retry the request. It also needs to know after how many retries to abandon the request and send back a suitable error message. This retry logic would have to be written in every service.
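As a preview of where this is heading: with a service mesh such as Istio (introduced later in this article), timeouts and retries can be declared as configuration instead of being coded into each service. The following is a minimal sketch of an Istio VirtualService, assuming a hypothetical `inventory` service; the resource name and the specific timeout and retry values are illustrative assumptions, not recommendations.

```yaml
# Sketch only: "inventory" and all values below are hypothetical.
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: inventory-retries
spec:
  hosts:
  - inventory                 # the service this rule applies to
  http:
  - route:
    - destination:
        host: inventory
    timeout: 10s              # overall deadline for the request, including retries
    retries:
      attempts: 3             # give up after three retries
      perTryTimeout: 2s       # abort a single attempt after two seconds
      retryOn: 5xx,connect-failure
```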

Metrics and Tracing Logic

It is desirable to monitor the performance of the services. Logic to expose important metrics, such as requests per second and average time per request, needs to be written in each service for tools like Prometheus. Similarly, services need to collect tracing data for analysis, for example using Zipkin. All this logic needs to be included in each microservice.

Developers in the organization need to write all of the above (and more) non-business logic for every service. This increases complexity and takes up time that could otherwise be spent on the key business logic of the core services.

Service Mesh

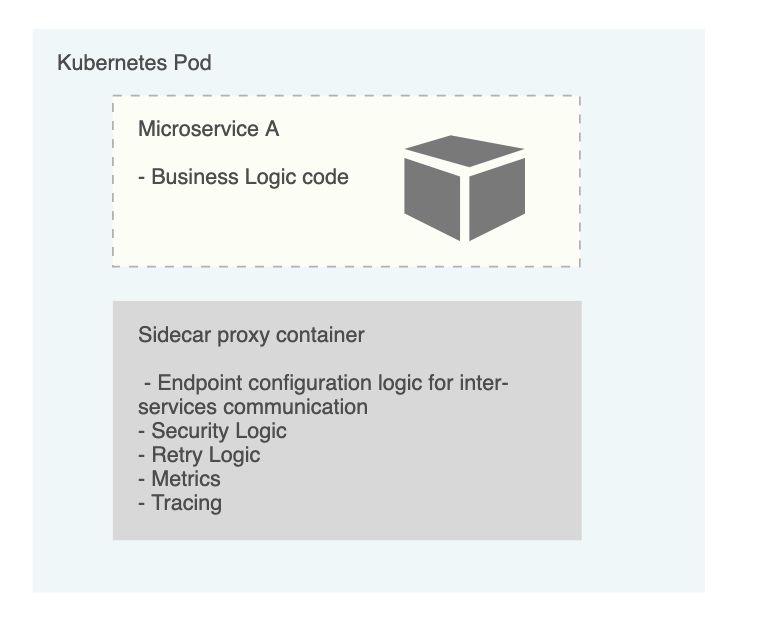

A service mesh takes all the non-business logic discussed above out of the services and handles it in a sidecar proxy. This means that a pod has two containers: one running the business logic, and a sidecar container that handles all the other logic and acts as a proxy for the pod. The service mesh manages the sidecar proxies for all the services and, through a control plane component, automates their deployment alongside each service. This lets the team focus on business logic and reduces the complexity of the architecture. The proxy in each service pod talks to the proxies in other service pods, and in this way the proxies handle the communication between services.

Istio

While service mesh is a concept, Istio is an implementation of this concept. Istio is very close to becoming the industry-wide standard implementation of service mesh in the cloud native market. In Istio, the sidecar proxy is Envoy, while the control plane component is called Istiod. Earlier, the control plane was divided into separate components such as Pilot, Galley, Citadel and Mixer, which managed features like configuration, service discovery and certificate management. From Istio v1.5 onwards, the control plane was consolidated into a single component called Istiod. Istiod also handles the injection and configuration of the Envoy proxies, so developers don’t have to modify their Kubernetes deployment and service YAML files. This results in a clear separation of Istio configuration from application configuration.

Source: istio.io
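One common way to get sidecars added without touching application manifests is to label a namespace for automatic injection. The following is a minimal sketch, assuming a hypothetical namespace called `shop`; the `istio-injection: enabled` label is the standard Istio label for automatic sidecar injection, while the namespace name is only an illustration.

```yaml
# Sketch only: the namespace name "shop" is hypothetical.
apiVersion: v1
kind: Namespace
metadata:
  name: shop
  labels:
    istio-injection: enabled   # pods created in this namespace get an Envoy sidecar
```

Pods that were already running before the label was applied need to be recreated to pick up the sidecar.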

Enabling inter-service communication using Istio

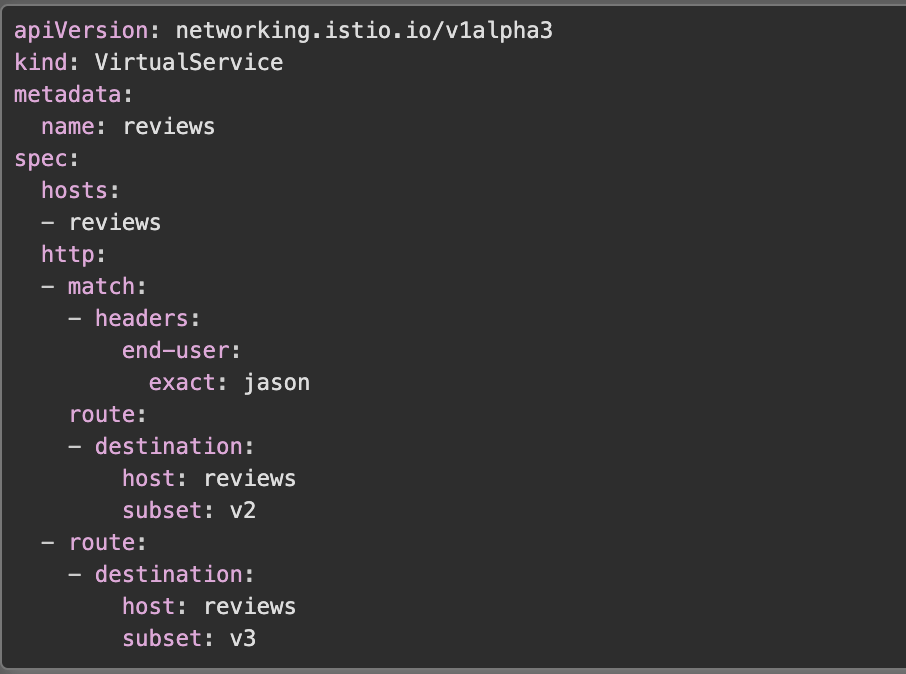

To configure the communication logic between microservices, we write custom resources, whose types Istio defines through Kubernetes Custom Resource Definitions (CRDs). These resources are YAML files written in the syntax of Kubernetes (Istio integrates with the Kubernetes API), and they dictate the behaviour of the Istio components. To manage inter-service communication, we need to write two kinds of resources:

VirtualService: This YAML file defines how traffic is routed to a particular service.

Source: istio.io
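A minimal VirtualService might look like the following sketch. It assumes a hypothetical `payment` service, loosely based on the e-commerce example earlier in this article; the resource name, host, path prefix and port are illustrative assumptions and are not taken from istio.io.

```yaml
# Sketch only: "payment", the path prefix and the port are hypothetical.
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: payment-route
spec:
  hosts:
  - payment                    # requests addressed to this host are matched
  http:
  - match:
    - uri:
        prefix: /api/pay       # only requests whose path starts with this prefix
    route:
    - destination:
        host: payment          # where the matched traffic is sent
        port:
          number: 8080
```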

DestinationRule: This YAML file defines the policies that apply to traffic after it has been routed to the destination service, such as the load balancing policy and the named versions (subsets) of the service.

Example: the my-svc destination service, with different load balancing policies. Round Robin policy for v2, and Random policy for other versions. Source: istio.io
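The configuration that the caption above describes can be sketched roughly as follows. This is a reconstruction under assumptions: the subset names `v1`, `v2` and `v3`, the `version` labels and the resource name are illustrative, and only the `my-svc` host and the two load balancing policies come from the caption.

```yaml
# Sketch only: subset names, labels and the resource name are assumptions.
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: my-destination-rule
spec:
  host: my-svc
  trafficPolicy:
    loadBalancer:
      simple: RANDOM            # default policy for all versions
  subsets:
  - name: v1
    labels:
      version: v1
  - name: v2
    labels:
      version: v2
    trafficPolicy:
      loadBalancer:
        simple: ROUND_ROBIN     # overrides the default for v2 only
  - name: v3
    labels:
      version: v3
```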

Istio features

Traffic Split: Using Istio, you can split traffic between different versions of your service. This helps in testing a new version of a service with a limited amount of traffic. If the new version works as expected, traffic can be shifted gradually from the older version to the newer one. This method of deploying an application is called canary deployment.
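A canary split is usually expressed with weights on the routes of a VirtualService. The following is a minimal sketch, assuming a hypothetical `shopping-cart` service with two versions; the names and the 90/10 split are illustrative, and the `v1` and `v2` subsets are assumed to be defined in a matching DestinationRule.

```yaml
# Sketch only: "shopping-cart", the subsets and the weights are hypothetical.
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: shopping-cart-canary
spec:
  hosts:
  - shopping-cart
  http:
  - route:
    - destination:
        host: shopping-cart
        subset: v1
      weight: 90               # most traffic stays on the current version
    - destination:
        host: shopping-cart
        subset: v2
      weight: 10               # a small share goes to the new version
```

Shifting traffic then amounts to changing the two weights, which must add up to 100.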

Service Discovery: Istio has an internal dynamic registry of services and their endpoints. This means that users don't have to add endpoints to the registry manually. As soon as a new microservice is deployed, its endpoints are added to the registry automatically.

Certificate Management: Istiod also acts as a certificate authority. It can generate and validate certificates for all the microservices in order to establish secure TLS communication between them.

Istiod automates key and certificate rotation at scale. Source: istio.io
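With certificates being issued to the proxies, mutual TLS between services can be required through a policy resource. The following is a minimal sketch of a mesh-wide PeerAuthentication policy. It assumes Istio's root namespace is the default `istio-system`; a real rollout would often start in a more permissive mode before enforcing this.

```yaml
# Sketch only: assumes the root namespace is the default "istio-system".
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default
  namespace: istio-system      # a policy in the root namespace applies mesh-wide
spec:
  mtls:
    mode: STRICT               # sidecars accept only mutual TLS traffic
```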

Metrics and Tracing: The Envoy proxies generate telemetry data for the traffic of all the microservices. This data can then be collected by tools such as Prometheus and used for application monitoring and analysis.

Istio Ingress Gateway: Istio also has its own ingress controller, which sits in front of all the deployed microservices. The ingress gateway controls which incoming traffic is allowed into the mesh and load balances it. Whenever the gateway receives traffic, it directs the traffic to the corresponding service according to the rules in the VirtualService YAML file bound to it.

Example: a gateway configuration that lets traffic from ext-host.example.com into the mesh on port 443. Source: istio.io
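A gateway of the kind the caption above describes can be sketched roughly as follows. Only the host and port come from the caption; the resource name, the HTTPS protocol, the `istio: ingressgateway` selector (the label on Istio's default ingress gateway deployment) and the `ext-host-cert` secret name are assumptions for illustration.

```yaml
# Sketch only: resource name, selector and secret name are assumptions.
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: ext-host-gwy
spec:
  selector:
    istio: ingressgateway       # which gateway pods this configuration applies to
  servers:
  - port:
      number: 443
      name: https
      protocol: HTTPS
    hosts:
    - ext-host.example.com      # only traffic for this host is admitted
    tls:
      mode: SIMPLE              # standard (one-way) TLS at the gateway
      credentialName: ext-host-cert   # hypothetical Kubernetes secret holding the certificate
```

The Gateway only admits the traffic. A VirtualService that lists this gateway in its `gateways` field is still needed to route the traffic to a service.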

Istio traffic flow

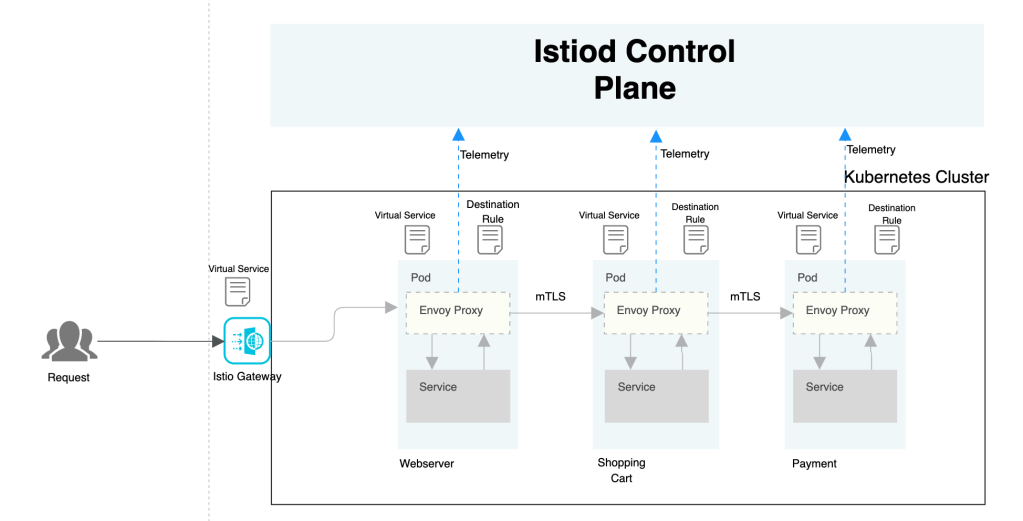

Once Istio is configured, the traffic in the setup flows as follows:

- The user sends a request to the front end web server in the microservices setup.

- The request lands on the Istio Ingress Gateway, which evaluates the VirtualService rules to decide how and where to send the request.

- The request is then sent to the web server pod, where it first reaches the Envoy proxy sidecar container.

- The Envoy proxy evaluates the request and sends it to the actual web server container in the same pod over localhost.

- After processing the request, the web server might want to call the payment service. In that case it sends the request back to its own Envoy proxy, which evaluates the VirtualService and DestinationRule rules and then forwards the request to the Envoy proxy container of the payment service pod using mTLS.

- This process repeats until the request is completely serviced and the response is sent back to the user.

- While the request is serviced at each pod, the Envoy proxies emit metrics data, which can then be collected and used for monitoring and analysis.

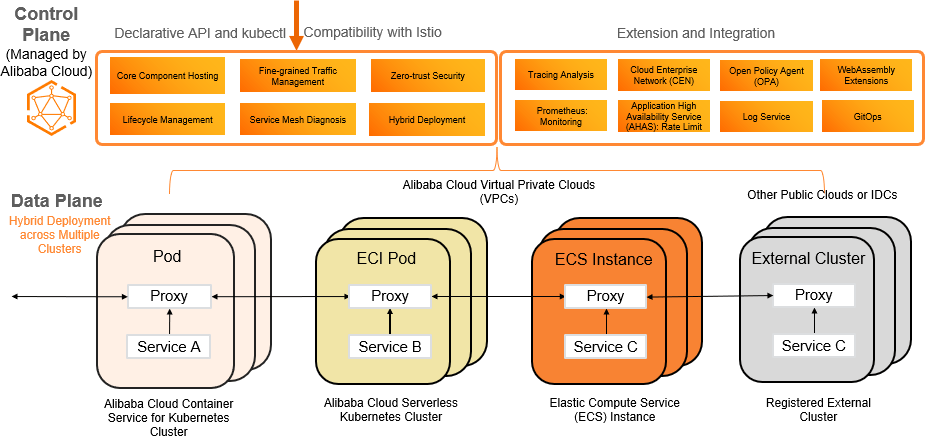

To set up Istio in your environment, you can refer to the Istio setup guide on the official documentation site. Service mesh is now also available on cloud platforms as a managed service. You can take advantage of such managed services to reduce the setup and management complexity of a service mesh. Alibaba Cloud Service Mesh is one example of a managed service mesh solution on a public cloud platform.

Alibaba Cloud Service Mesh (ASM) is a fully managed service mesh platform that is compatible with the open source Istio service mesh. ASM allows you to manage services in a simplified manner. For example, you can use ASM to route and split inter-service traffic, secure inter-service communication with authentication, and observe the behavior of services in meshes. This can greatly reduce your development and operations and maintenance (O&M) workload.